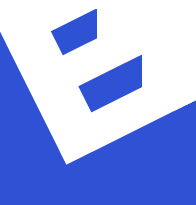

From Simulation to Human-Centric Digital Twins

In a recent webinar developed in collaboration with AnyLogic, we explored a question that many engineers quietly struggle with:

Why do our simulation results look perfect… but reality doesn’t?

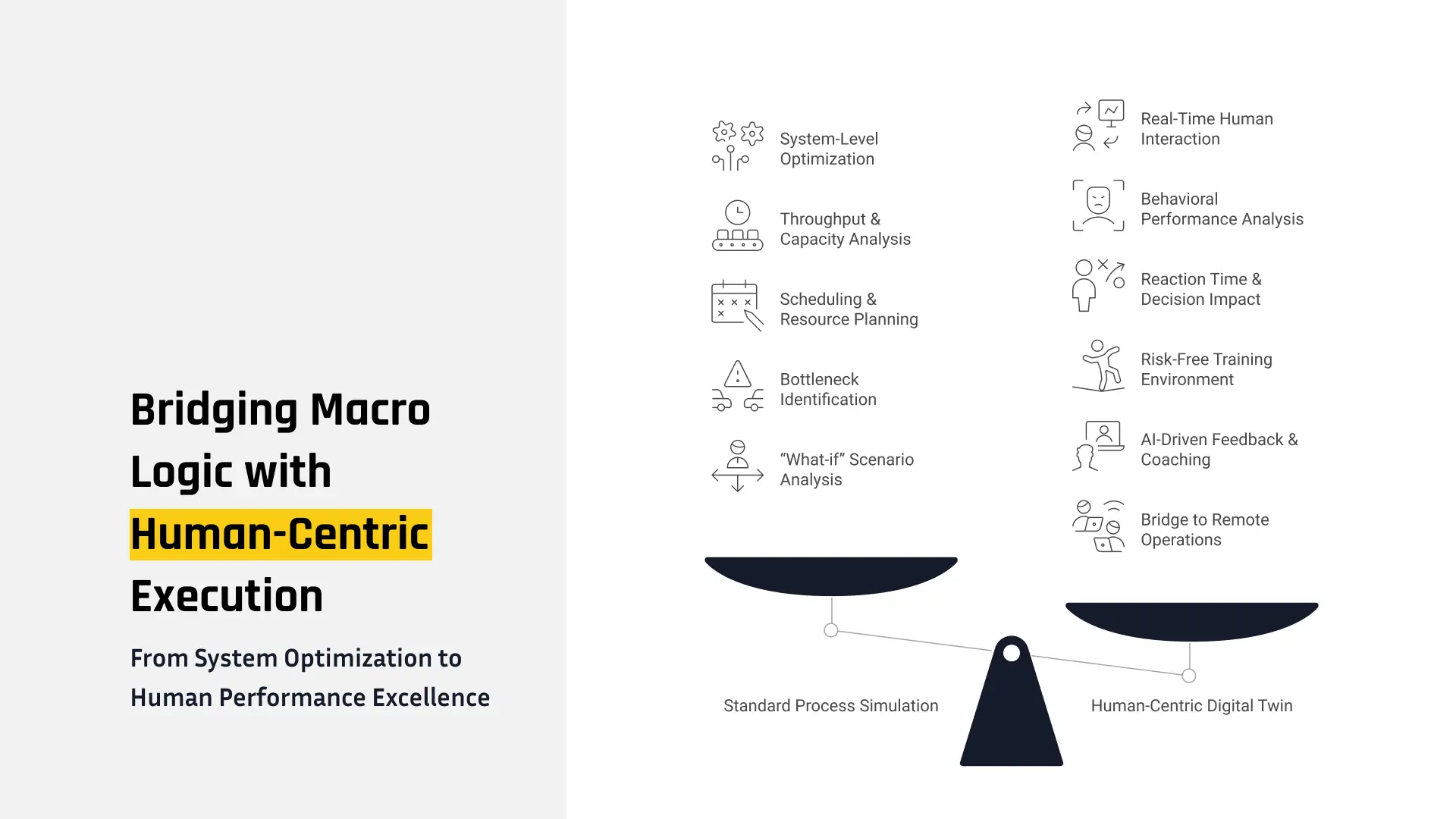

For years, process simulation has been a powerful tool. It helps us design systems, optimize throughput, and predict performance. But there’s always been a gap — a subtle one, but critical. That gap is human behavior.

The Problem We Don’t Usually Model

Most simulation models assume that everything works exactly as planned. Machines operate on schedule, materials flow smoothly, and most importantly, operators behave consistently. But in real industrial environments, that’s rarely the case.

A slight delay in handling material, a small hesitation in decision-making, or a different execution pattern by an operator can ripple through the system. What starts as a minor variation can turn into congestion, idle time, or even a full stop in the production line. This is exactly where traditional simulation falls short — not because it’s wrong, but because it’s incomplete.
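To make the ripple effect concrete, here is a minimal sketch in plain Python (not the AnyLogic model from the webinar). It assumes a single operator station, one billet arriving every 10 seconds, and an average handling time of 9 seconds. These numbers and the `mean_wait` helper are illustrative assumptions only. The two runs differ only in whether the operator's handling time is perfectly constant or varies around the same average.

```python
import random

def mean_wait(handling_times, interarrival):
    """Average time a billet waits before the operator can start on it."""
    wait = 0.0   # waiting time of the current billet
    total = 0.0
    for handling in handling_times:
        total += wait
        # Lindley recursion: the next billet inherits whatever backlog is left
        # after this one is handled and one arrival interval has passed.
        wait = max(0.0, wait + handling - interarrival)
    return total / len(handling_times)

rng = random.Random(1)   # fixed seed so the run is repeatable
n = 10_000
interarrival = 10.0      # assumed: one billet arrives every 10 s

# Idealized operator: always exactly 9 s per billet.
nominal = [9.0] * n
# Same 9 s average, but each handling takes anywhere from 4 s to 14 s.
variable = [rng.uniform(4.0, 14.0) for _ in range(n)]

print("nominal :", mean_wait(nominal, interarrival))
print("variable:", mean_wait(variable, interarrival))
```

With constant handling the queue never forms, so the nominal wait is zero. With the same average but realistic spread, billets start waiting even though the operator is, on paper, fast enough.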

Moving Toward a Human-Centric Digital Twin

Instead of treating human behavior as something external to the model, we asked a different question:

What if we bring the human into the simulation?

This idea led us to develop a Human-Centric Digital Twin — a model where system logic and human interaction exist together, not separately. In this approach, we don’t just simulate how the system should work.

We simulate how it actually works — with human decisions, timing variations, and real-world imperfections.

A Real Industrial Case: Continuous Homogenizing Line

To make this concept concrete, we applied it to a Continuous Homogenizing Line used in aluminum production.

On paper, this is a highly automated system. Materials move through heating, holding, and cooling stages in a continuous flow. Everything seems predictable.

But in reality, certain parts of the process still depend on operators, especially material handling and timing coordination. And that’s where things get interesting. Even small differences in how an operator performs a task can influence the entire system. A delay in removing a defective billet, or a mismatch in transfer timing, can create back-pressure that affects upstream and downstream processes.

In other words: The system may be automated — but its performance is still human-influenced.

Turning Simulation Into a Digital Laboratory

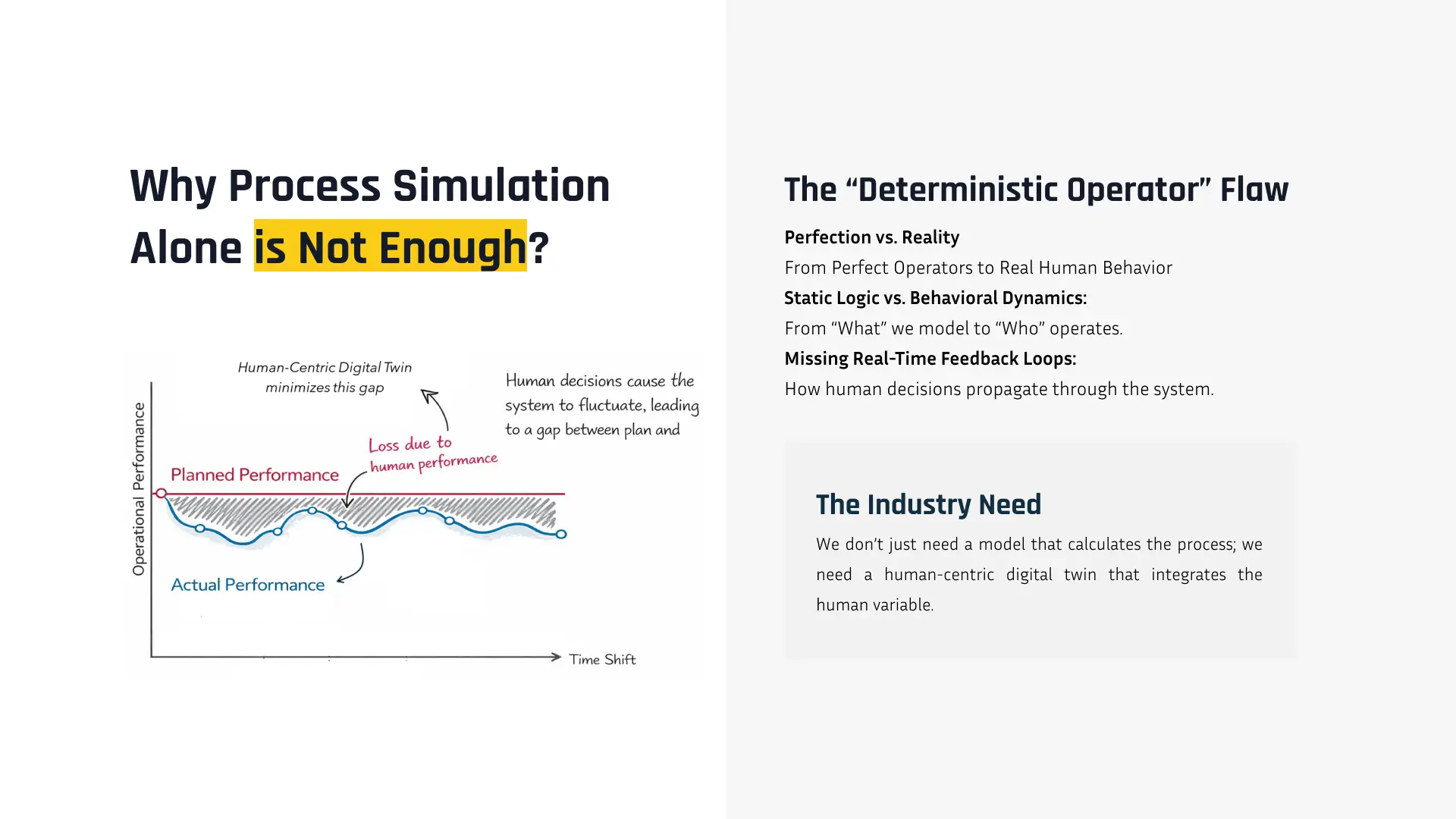

One of the key capabilities we demonstrated in the webinar is using the model as a kind of digital laboratory.

Instead of running a single scenario, we can experiment. We can change arrival patterns, adjust furnace timing, modify quality rates, or tweak how material handling works. Even sustainability aspects like energy consumption and CO₂ emissions can be analyzed within the same environment. This gives engineers a powerful way to explore “what if” scenarios before anything happens in the real system. But there’s still something missing.

Understanding the system when everything works perfectly is useful — but reality is rarely perfect.
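The "what if" experimentation described in this section amounts to sweeping a model over a grid of input parameters. The sketch below shows that pattern in plain Python. It is an assumption-laden toy, not the webinar's AnyLogic model: `good_billets_per_hour` is a hypothetical stand-in for a full simulation run, and every parameter value is a placeholder.

```python
from itertools import product

def good_billets_per_hour(arrival_interval_s, furnace_cycle_s, defect_rate):
    """Toy steady-state estimate: the slower of arrivals and furnace sets the pace."""
    pace_s = max(arrival_interval_s, furnace_cycle_s)
    return 3600.0 / pace_s * (1.0 - defect_rate)

# Placeholder scenario values; a real study would take these from the plant.
arrival_intervals = [60, 90]   # seconds between arriving billets
furnace_cycles = [75, 90]      # seconds per furnace cycle
defect_rates = [0.02, 0.10]    # fraction of billets rejected

# Run every combination of the three inputs and collect the results.
results = [
    {
        "arrival_s": a,
        "furnace_s": f,
        "defect_rate": d,
        "good_per_hour": good_billets_per_hour(a, f, d),
    }
    for a, f, d in product(arrival_intervals, furnace_cycles, defect_rates)
]

# Rank scenarios from best to worst output.
for row in sorted(results, key=lambda r: r["good_per_hour"], reverse=True):
    print(row)
```

In a real digital laboratory the function call would be replaced by a simulation run, and further outputs such as energy use or CO₂ emissions could be added as extra columns in each result row.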

When Humans Enter the Loop

This is where training mode comes in. We designed the system so that operators can actually interact with the model. Not in an abstract way, but in a realistic, experience-driven environment. To make this possible, we introduced physical behavior into the simulation. Objects don’t overlap unrealistically, movements follow constraints, and interactions feel closer to real-world conditions. We also added guidance mechanisms — visual cues and structured scenarios — to help operators learn how to perform tasks more effectively. At this point, the digital twin stops being just a model. It becomes something else:

A space where people can learn, make mistakes, and improve — without risk.

The Role of AI: Making Sense of the Data

As you can imagine, combining simulation and training generates a large amount of data. And data alone isn’t enough. What we really need is a way to understand it. This is where AI comes in — not as a replacement for humans, but as a layer that helps interpret what’s happening. It analyzes system performance, detects inefficiencies, identifies patterns, and even compares how different operators perform under similar conditions. But more importantly, it turns all of this into something usable:

Not just numbers, but explanations. Not just reports, but guidance.

Making It Visible: Omniverse and Immersive Interaction

Another key part of this work is visualization. By integrating with NVIDIA Omniverse, we move beyond traditional 2D views. The system becomes something you can see, explore, and even step into. Different users can interact with the same digital twin from different perspectives — an operator view, a monitoring view, or an analytical view. And with VR, the experience becomes even more direct.

You’re no longer observing the system.

You’re inside it.

Looking Forward: From Digital Twin to Operation Platform

One of the ideas we discussed in the webinar — still in development — is extending this approach toward remote operation. Imagine a system where real-time data flows into the model, and operators interact with it remotely. The digital twin becomes not just a representation, but an interface to the real system. We’re not fully there yet. But the path is becoming clearer.

So… Why Does This Matter?

Because the future of industrial systems is not about choosing between humans and AI. It’s about combining them.

- AI can analyze.
- Automation can execute.
- But humans still decide, adapt, and respond.

A Human-Centric Digital Twin is where all of these come together.

Watch the Full Webinar

If you’d like to see the full system, demos, and explanations, you can watch the webinar here: